ASO-Strategist: Fine-Tuned Model for App Store Optimization

A 3B-parameter model fine-tuned for App Store Optimization, trained on 1,000 curated app description examples using Apple's MLX framework on Apple Silicon.


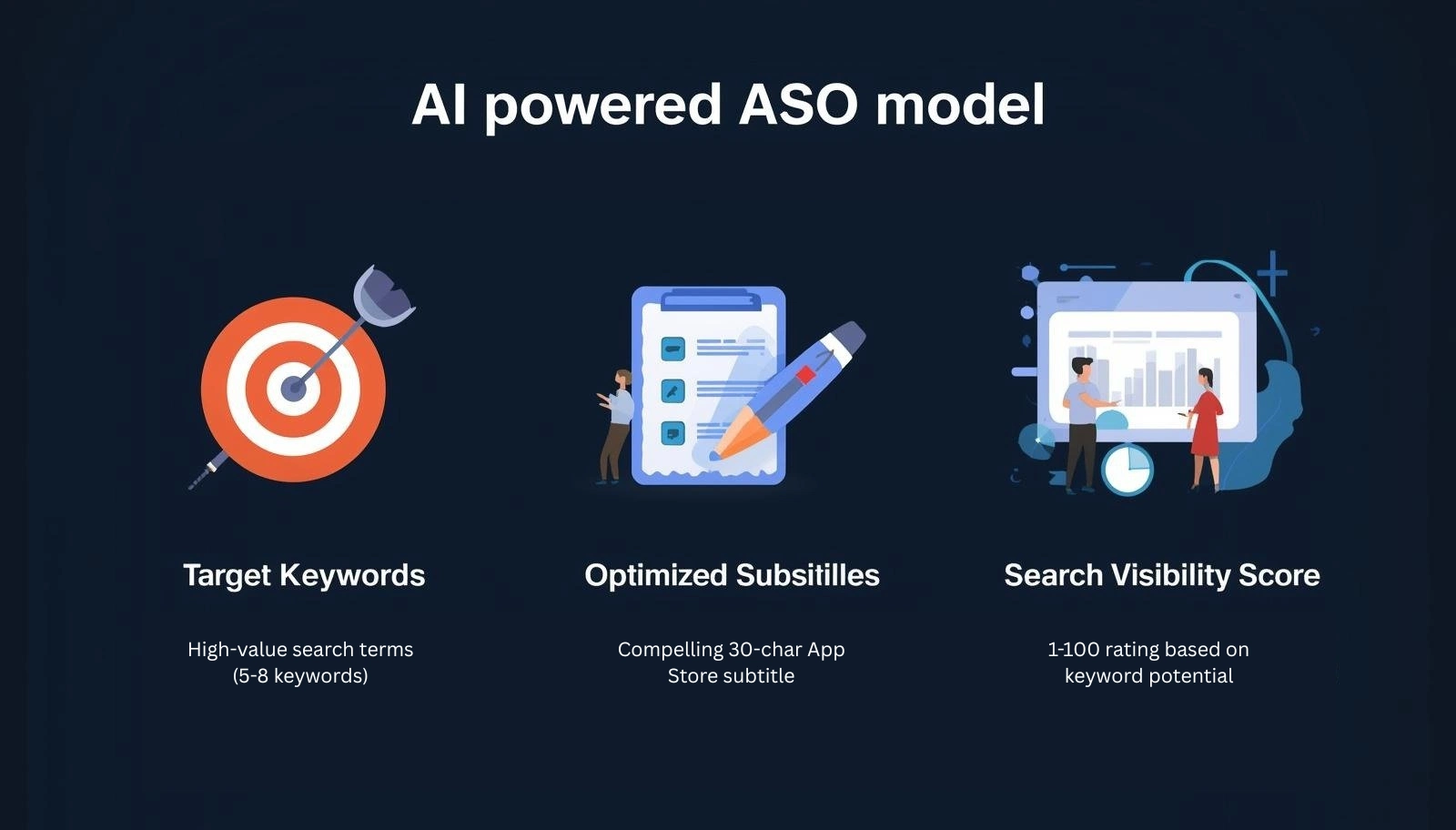

ASO-Strategist turns an app description into three App Store Optimization (ASO) outputs: target keywords, optimized subtitles, and a visibility score. General-purpose language models lack domain-specific training data for this task; this model fills that gap with specialized fine-tuning on 1,000 curated ASO examples.

🔍 Problem

General-purpose language models produce generic, platform-incompatible ASO suggestions. App Store Optimization requires understanding specific constraints: 30-character subtitle limits, keyword density trade-offs, platform-specific ranking signals, and category-level competition. Without domain-specific fine-tuning, models default to keyword suggestions that are too broad, too generic, or structurally invalid for App Store submission.

🛠️ Architecture & Training

Base Model: Llama 3.2 3B Instruct

Framework: Apple MLX on Apple Silicon

Technique: LoRA (Low-Rank Adaptation)

Training Data: 1,000 curated app description examples with structured ASO outputs

Output Format: JSON with keyword lists, subtitle suggestions, visibility scores
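MLX-LM's LoRA trainer reads JSONL files and accepts a chat format in which each line holds a `messages` list. The sketch below shows how one training example could be serialized under that format. The output keys (`keywords`, `subtitles`, `visibility_score`), the helper name `make_record`, and the placeholder values are hypothetical; they are not taken from the actual ASO-Strategist dataset.

```python
import json


def make_record(description: str, keywords: list, subtitles: list, score: int) -> str:
    """Serialize one example as a JSONL line in MLX-LM's chat format.

    The target schema (keywords / subtitles / visibility_score) is a
    hypothetical reconstruction of the structured ASO output described above.
    """
    target = {"keywords": keywords, "subtitles": subtitles, "visibility_score": score}
    record = {
        "messages": [
            {"role": "user", "content": description},
            # The assistant turn is the JSON string the model learns to emit
            {"role": "assistant", "content": json.dumps(target)},
        ]
    }
    return json.dumps(record)


if __name__ == "__main__":
    # Placeholder values for illustration only, not real training data
    print(make_record(
        "A habit tracker with streaks and reminders",
        ["habit tracker", "daily habits", "streaks", "routine planner", "goal tracker"],
        ["Build Better Daily Habits", "Track Streaks and Goals"],
        64,
    ))
```

Encoding the assistant answer as a JSON string keeps every training target in exactly the structure the model is expected to reproduce at inference time.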

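LoRA trains small low-rank adapter matrices while the base model's weights stay frozen, which kept compute and memory requirements feasible on consumer hardware; the adapters targeted the layers most relevant to structured output generation. The base model was chosen for its instruction-following ability, which supports consistent JSON output.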
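In LoRA, a frozen weight matrix W of shape d × k is left untouched and a trainable update (α/r)·B·A is added, where B is d × r, A is r × k, and the rank r is much smaller than d and k. The pure-Python sketch below shows the forward pass and the trainable-parameter savings. The matrix sizes, rank, and α are illustrative assumptions, not the settings used to train ASO-Strategist.

```python
def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W, A, B, x, alpha, r):
    """y = W x + (alpha / r) * B (A x); W is frozen, only A and B are trained."""
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def trainable_params(d, k, r):
    """Full fine-tuning updates d*k weights; a LoRA adapter trains r*(d + k)."""
    return d * k, r * (d + k)


if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0]]  # frozen 2x2 weight
    A = [[1.0, 1.0]]              # r x k with r = 1
    B = [[0.0], [0.0]]            # d x r, zero-initialized so the adapter starts as a no-op
    print(lora_forward(W, A, B, [2.0, 3.0], alpha=2.0, r=1))
    # Hypothetical 3072 x 3072 projection with a rank-8 adapter
    full, lora = trainable_params(3072, 3072, 8)
    print(full, lora, f"{lora / full:.2%}")
```

Because B starts at zero, the adapted model initially behaves exactly like the base model, and training only has to learn the small A and B matrices for each targeted layer.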

📊 Capabilities

The model transforms app descriptions into three structured components:

Keywords (5-8 terms): High-value search terms ranked by estimated relevance

Subtitles: Multiple options within the 30-character App Store limit

Visibility Score (1-100): Estimated keyword competitiveness rating
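These three constraints can be checked mechanically before an output is used. The minimal validator below assumes the model's JSON uses the hypothetical keys `keywords`, `subtitles`, and `visibility_score`; adjust them to whatever schema the model actually emits.

```python
SUBTITLE_LIMIT = 30  # App Store subtitle character limit


def validate_aso_output(data: dict) -> list:
    """Return a list of constraint violations; an empty list means the output passes.

    The keys are hypothetical and mirror the three components listed above.
    """
    problems = []

    keywords = data.get("keywords", [])
    if not 5 <= len(keywords) <= 8:
        problems.append(f"expected 5-8 keywords, got {len(keywords)}")

    subtitles = data.get("subtitles", [])
    if not subtitles:
        problems.append("no subtitle options")
    for s in subtitles:
        if len(s) > SUBTITLE_LIMIT:
            problems.append(f"subtitle exceeds {SUBTITLE_LIMIT} chars ({len(s)}): {s!r}")

    score = data.get("visibility_score")
    # bool is a subclass of int in Python, so reject it explicitly
    if isinstance(score, bool) or not isinstance(score, int) or not 1 <= score <= 100:
        problems.append(f"visibility_score must be an integer from 1 to 100, got {score!r}")

    return problems
```

Running a check like this on every generation, and regenerating on failure, is one way to keep structurally invalid suggestions out of an App Store submission.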

Example input: “A meditation app with guided sleep stories, breathing exercises, and daily mindfulness reminders for stress relief”

Example output: structured JSON with 7 keyword suggestions, 3 subtitle options, and a visibility score of 78.
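A raw generation may wrap that JSON in extra text, such as a short preamble or a Markdown code fence. A hedged sketch for pulling the first JSON object out of the generated string with the standard library's `json.JSONDecoder.raw_decode`; the helper name `extract_json` is hypothetical.

```python
import json


def extract_json(text: str) -> dict:
    """Return the first JSON object embedded in a model generation.

    Tries to decode starting at each '{' in turn, so leading prose or a
    surrounding Markdown fence does not break parsing.
    """
    decoder = json.JSONDecoder()
    start = text.find("{")
    while start != -1:
        try:
            obj, _ = decoder.raw_decode(text[start:])
            if isinstance(obj, dict):
                return obj
        except json.JSONDecodeError:
            pass  # not a valid object at this position; try the next '{'
        start = text.find("{", start + 1)
    raise ValueError("no JSON object found in generation")
```

`raw_decode` stops at the end of the first complete JSON value, so any trailing text after the object (for example a closing fence) is ignored.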

🛡️ Limitations

- Trained on synthetic data, not real App Store performance metrics

- English language only (multilingual support planned)

- Subtitle recommendations occasionally exceed 30 characters

- Keywords reflect general patterns, not real-time App Store data

- Not a substitute for professional ASO analysis and market research
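Because subtitles occasionally exceed 30 characters, a post-processing step can repair them instead of discarding the whole output. The minimal sketch below shortens a subtitle at the last word boundary that fits, or returns `None` if no complete word fits. The helper name `fit_subtitle` and this repair policy are assumptions, not part of the released model.

```python
from typing import Optional


def fit_subtitle(subtitle: str, limit: int = 30) -> Optional[str]:
    """Trim a subtitle to at most `limit` characters at a word boundary.

    Returns None when not even the first word fits, so the caller can drop it.
    """
    subtitle = " ".join(subtitle.split())  # collapse repeated whitespace
    if len(subtitle) <= limit:
        return subtitle
    # Look one character past the limit: a space there means the word ends exactly at the limit
    cut = subtitle[: limit + 1].rfind(" ")
    if cut <= 0:
        return None
    # Drop dangling separators such as a trailing "&" left by the cut
    trimmed = subtitle[:cut].rstrip(" ,;:-&")
    return trimmed or None
```

Cutting at word boundaries avoids half-words like "Exerc", at the cost of sometimes losing a trailing keyword; whether to trim or regenerate is a product decision.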

See the official Hugging Face documentation for complete technical details.

🚀 Quick Start

Hugging Face

Access the model and documentation

Ollama

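```shell
ollama pull fahidnasir/ASO-Strategist && ollama run fahidnasir/ASO-Strategist
```

Python

```python
from mlx_lm import load, generate

model, tokenizer = load("fahidnasir/ASO-Strategist")

# Format the app description with the instruct model's chat template
messages = [{"role": "user", "content": "A meditation app with guided sleep stories, breathing exercises, and daily mindfulness reminders for stress relief"}]
prompt = tokenizer.apply_chat_template(messages, add_generation_prompt=True)

# Raise max_tokens so longer JSON outputs are less likely to be truncated, and print the result
print(generate(model, tokenizer, prompt=prompt, max_tokens=512))
```

💡 Key Takeaways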

- Data quality determines output quality — 1,000 well-structured examples outperform larger noisy datasets for domain-specific tasks.

- Base model instruction-following matters — models that reliably follow structured output prompts reduce post-processing overhead.

- Domain constraints must be encoded in training data — the 30-character subtitle limit requires enforcement across training examples.

- Multiple training iterations are expected — output quality requires several cycles of data refinement and validation.

- Synthetic training data has measurable limits — validation against live App Store data is essential before production deployment.
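Enforcing the subtitle limit across training examples can be as simple as filtering the JSONL file before fine-tuning. A sketch assuming chat-format records whose last message is the assistant's JSON answer with a hypothetical `subtitles` key; the function name `filter_training_lines` is also hypothetical.

```python
import json


def filter_training_lines(lines, limit=30):
    """Keep JSONL training records whose target subtitles all fit within `limit`.

    Assumes each record is {"messages": [...]} and the last message holds the
    assistant's JSON answer with a (hypothetical) "subtitles" list.
    Returns (kept_lines, dropped_count).
    """
    kept, dropped = [], 0
    for line in lines:
        if not line.strip():
            continue  # skip blank lines
        record = json.loads(line)
        answer = json.loads(record["messages"][-1]["content"])
        if all(len(s) <= limit for s in answer.get("subtitles", [])):
            kept.append(line)
        else:
            dropped += 1
    return kept, dropped
```

Applied to an open file (for example `filter_training_lines(open("train_raw.jsonl"))`), the kept lines can be written back out as the training file, and the dropped count gives a quick measure of how often the raw data violated the constraint.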