I Trained My First AI Model

Built and published ASO-Strategist, a fine-tuned language model for App Store Optimization using LoRA on Apple's MLX framework.

I built and published ASO-Strategist, a fine-tuned language model for App Store Optimization using LoRA on Apple’s MLX framework. Trained on 1,000 curated examples, it’s available on Hugging Face, Ollama, Kaggle, and Weights & Biases. This post covers the data pipeline, training decisions, and lessons from publishing across four platforms.

🔍 The Problem: Generic Models for Specialized Work

General-purpose LLMs produce generic ASO advice because they lack domain-specific training data. Most don’t understand app categories, keyword competition, or conversion psychology. App Store Optimization requires understanding user behavior, market trends, and creative strategy—all things that demand specialized models.

🛠️ Approach: Data, Training, Distribution

Phase 1: Data Pipeline (2 weeks)

The breakthrough insight: focused, high-quality data beats massive generic datasets.

I created 1,000 carefully curated examples, each containing:

- Real app descriptions

- Target keywords for different categories

- Optimized 30-character subtitles

- Visibility scores (1-100 rating)

This took longer than writing code, but the quality of training data determines model quality.
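
To make the shape of a training example concrete, here is a minimal sketch of one record plus a consistency check. The field names, the sample app, and the one-JSON-object-per-line layout are assumptions for illustration, not the actual dataset schema.

```python
import json

MAX_SUBTITLE_LEN = 30  # subtitle length limit used throughout this post


def validate_example(example: dict) -> list[str]:
    """Return a list of problems found in one training example (empty if clean)."""
    problems = []
    if not example.get("description", "").strip():
        problems.append("missing description")
    keywords = example.get("keywords", [])
    if not keywords:
        problems.append("no keywords")
    if len({k.lower() for k in keywords}) != len(keywords):
        problems.append("duplicate keywords")
    subtitle = example.get("subtitle", "")
    if not 0 < len(subtitle) <= MAX_SUBTITLE_LEN:
        problems.append(f"subtitle must be 1-{MAX_SUBTITLE_LEN} characters")
    score = example.get("visibility_score")
    if not isinstance(score, int) or not 1 <= score <= 100:
        problems.append("visibility_score must be an integer from 1 to 100")
    return problems


# Hypothetical record: the app and all values are invented for illustration.
example = {
    "description": "Track daily water intake with reminders and weekly charts.",
    "category": "Health & Fitness",
    "keywords": ["water tracker", "hydration reminder", "drink water"],
    "subtitle": "Daily Hydration Reminders",
    "visibility_score": 72,
}

assert validate_example(example) == []
print(json.dumps(example))  # one line of a JSONL training file
```

Running a check like this over every record before training is a cheap way to catch inconsistent examples early.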

Phase 2: Training Stack (1 week)

I had to learn three new tools:

- MLX Framework: Apple’s ML framework optimized for Apple Silicon Macs. Significantly faster than PyTorch for fine-tuning on M1/M2/M3 chips.

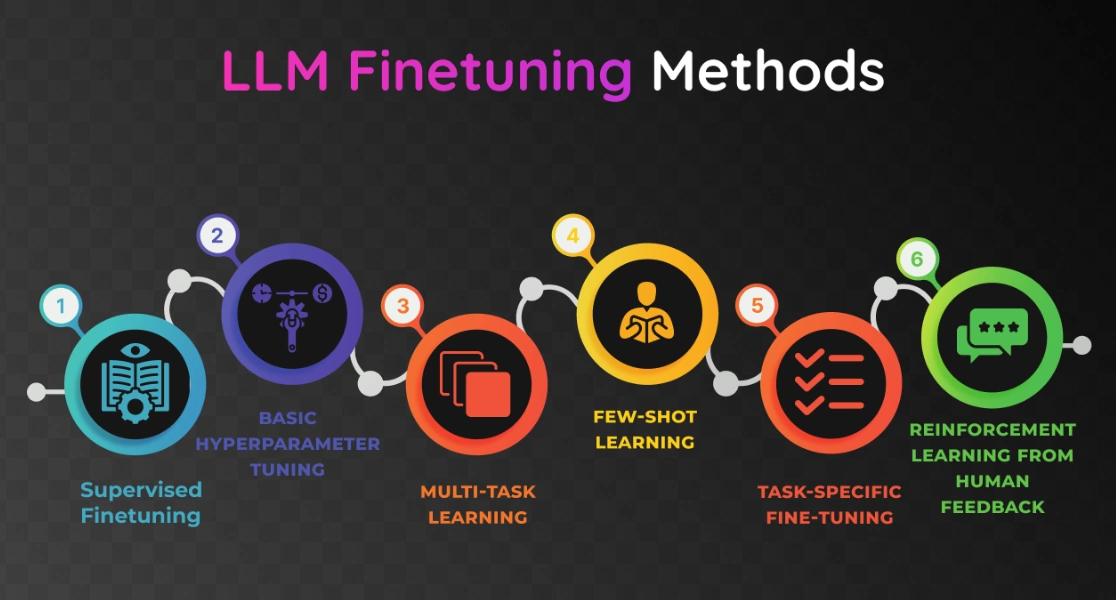

- LoRA Fine-tuning: Low-Rank Adaptation. Instead of retraining the entire model, LoRA freezes the base weights and trains small low-rank adapter matrices alongside them. This dramatically reduces compute and memory requirements while largely maintaining quality.

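- Model Quantization: Converts 32-bit (or 16-bit) floating-point weights to 8-bit or 4-bit values. Going from 32-bit to 8-bit reduces model size by about 75%, and 4-bit by about 87.5%, usually with minimal accuracy loss.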
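
The savings from LoRA and quantization are easy to check with back-of-the-envelope arithmetic. The sketch below assumes a single 4096 x 4096 projection layer, a LoRA rank of 8, and a 7-billion-parameter model. These numbers are illustrative, not the actual training configuration.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Weights in one dense layer of shape (d_out x d_in)."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Weights in one LoRA adapter: A is (rank x d_in), B is (d_out x rank)."""
    return rank * d_in + d_out * rank


def model_size_gb(n_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (10^9 bytes), ignoring quantization scales."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed layer shape and rank, for illustration only.
d, rank = 4096, 8
full = full_params(d, d)                  # 16,777,216 weights
lora = lora_trainable_params(d, d, rank)  # 65,536 weights
print(f"LoRA trains {lora / full:.2%} of this layer's weights")

# Assumed model size of 7 billion parameters.
for bits in (32, 16, 8, 4):
    print(f"{bits:>2}-bit: {model_size_gb(7e9, bits):.1f} GB")
```

A 75% reduction corresponds to a 4x drop in bits per weight, for example 32-bit to 8-bit or 16-bit to 4-bit.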

Phase 3: Build and Validate

The training process revealed specific challenges:

- Data Quality: Each example had to be realistic and internally consistent. Bad examples teach the model bad patterns.

- Training Stability: I tuned the learning rate, batch size, and number of epochs to prevent overfitting or divergence.

- Output Format: The model needed to produce consistent JSON output with keywords, subtitle, and reasoning. I used structured prompts and validation in post-processing.

- Performance: Validation loss settled at 0.320 once training converged, suggesting the model learned meaningful patterns.
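
The post-processing validation mentioned under Output Format could look roughly like the sketch below. The field names `keywords`, `subtitle`, and `reasoning` come from the description above. The function name and the exact checks are assumptions, not the code used in the project.

```python
import json
import re

REQUIRED_FIELDS = {"keywords": list, "subtitle": str, "reasoning": str}


def parse_model_output(raw: str) -> dict:
    """Pull a JSON object out of raw model text and check its fields.

    Takes the span from the first '{' to the last '}' so that any chatter
    before or after the JSON is ignored. Raises ValueError if unusable.
    """
    match = re.search(r"\{.*\}", raw, flags=re.DOTALL)
    if match is None:
        raise ValueError("no JSON object found in model output")
    try:
        data = json.loads(match.group(0))
    except json.JSONDecodeError as exc:
        raise ValueError(f"invalid JSON: {exc}") from exc
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            raise ValueError(f"missing or malformed field: {field}")
    if len(data["subtitle"]) > 30:
        raise ValueError("subtitle exceeds 30 characters")
    return data


# Hypothetical model output with extra text around the JSON.
raw = 'Sure! {"keywords": ["budget app"], "subtitle": "Simple Budget Planner", "reasoning": "Low competition."}'
result = parse_model_output(raw)
print(result["subtitle"])
```

Rejecting malformed outputs with a clear error makes it straightforward to retry the generation instead of passing bad data downstream.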

📊 Results: Publishing and Impact

I distributed the model across four platforms to reach different audiences:

Hugging Face

The primary model hub. Includes comprehensive documentation, training metrics, and inference examples.

Ollama

For developers who want one-command local setup:

ollama pull fahidnasir/ASO-Strategist

ollama run fahidnasir/ASO-Strategist

Kaggle

Data scientists can experiment with the model directly in notebooks.

Weights & Biases

ML engineers can inspect training metrics, experiments, and model performance.

Model Capabilities

ASO-Strategist takes any app description and outputs:

- Target Keywords: 5-8 high-value search terms ranked by competition and relevance

- Optimized Subtitle: A compelling 30-character subtitle

- Search Visibility Score: 1-100 rating based on keyword potential

- Strategic Reasoning: Explanation of keyword choices for that app category
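
For scripted use, the model can also be called through the HTTP API of a local Ollama server. This is a minimal sketch: it assumes the model has already been pulled, that Ollama is listening on its default port 11434, and that the raw app description is sent as the whole prompt. The helper names are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL = "fahidnasir/ASO-Strategist"


def build_request(app_description: str) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {
        "model": MODEL,
        "prompt": app_description,  # assumption: the description is the full prompt
        "stream": False,            # ask for one JSON response instead of chunks
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_aso_strategist(app_description: str, timeout: float = 120.0) -> str:
    """Send the description to Ollama and return the generated text."""
    request = build_request(app_description)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Calling `ask_aso_strategist("A meditation timer with guided breathing sessions.")` returns the raw text produced by the model, which still needs to be parsed and validated before use.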

Future Versions

I’m planning improvements based on user feedback:

- 8K context window for longer app descriptions

- Multilingual support for global ASO strategies

- Real-time App Store data integration for trending keywords

- Category-specific optimization for different app types

💡 Key Takeaways

- Data quality trumps quantity: 1,000 hand-curated examples outperformed generic fine-tuning datasets. Spend time on data curation.

- Specialized models solve real problems: A 7B model with a LoRA adapter trained on domain data can outperform a 70B generic model on niche tasks.

- LoRA is a game-changer: Fine-tuning specialized models is now accessible on consumer hardware. No need for massive compute clusters.

- Distribution matters as much as quality: Publishing to four platforms reaches different communities and use cases. Document well for each audience.

- Iteration beats perfection: Ship early, collect feedback, iterate. The improvements planned for version 2.0 come from actual usage, not speculation.